In the last 18 years, 4,535 posts and 3,263,700 words (yes, really, I got a plugin to count them and everything), I have never once felt the need to outsource my thinking and creativity to a machine. There are two posts written by "guest authors" (which, spoiler, were actually both me in a cunning disguise!) and there are a couple of posts where I permitted drunken friends the opportunity to contribute a sentence or two to a post I was writing while out and about, but the remainder is all me, scooping out the contents of my brain and plopping it onto the page for no other reason than the fact that I enjoy doing so, and occasionally find it helpful.

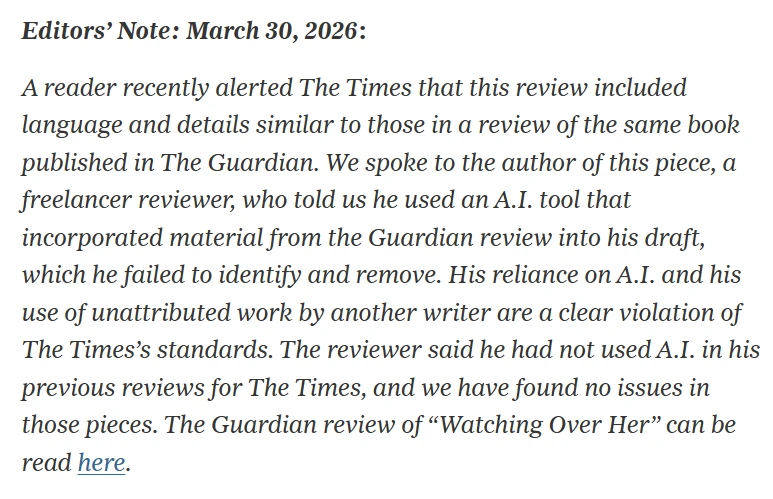

Today, this notice appeared in the New York Times on a book review it had published:

This, to me, is unforgivable. Supposedly there are plenty of writers out there who are doing this (or something like it, anyway), but to me, it is unfathomably awful. To be a writer, someone who cares about your craft, you have to give a shit. And absolutely nothing says "I don't give a shit" quite like relying on generative AI so heavily that your article has to be pulled because its plagiarism is too obvious.

I mean, when you think about it, it's obvious that this would happen, given the way generative AI works and is trained. If it's pulling all its wording from existing texts it has absorbed from around the Web (without any compensation for the original authors), then of course it's going to come up with some of the same things, perhaps even the exact same phrasing.

You'd think it would be obvious, anyway, and that any writer worth their salt would therefore not rely on it. Apparently this is not the case. Just as the above-linked Wired article should really result in all the authors named in it being blacklisted from every freelance writing pool, effective immediately, this incident should be the end of Alex Preston's career. There should be no second chances. To quote the old Batman meme, this is the weapon of the enemy; we do not need it; we will not use it.

Believe me, at this point I've heard every pro-AI argument there is (some, like the nonsensical "back in the '90s some people thought the Internet would be a bad thing!!" one, more than others), and none of them stands up to the slightest bit of scrutiny. AI does not make you a better writer. AI does not make you a writer. The only thing that makes you a writer is, quite simply, writing. And if you are not sitting down and writing something for yourself, whether that be by putting pen to paper, tapping away at a keyboard or dictating your words aloud, you are not a writer. And no, "writing" your prompt to get the bot to churn out a thousand words for you does not count.

Humanity's written languages have survived for thousands of years (albeit with plenty of evolution) through people being taught how to use them. Learning to read and write is, today, a fundamental part of your early socialisation. Yes, some folks have specific learning needs that make it harder or even impossible for them to do so, but even for them, generative AI is emphatically not the answer: we already have plenty of assistive methods and technologies that allow these people to thrive without relying on the odious fad that is presently bleeding the planet dry.

So I'm sorry, but I have no patience left whatsoever for incidents like this. The people involved in the Wired and New York Times articles above deserve to be kicked out of their profession. Because if they have no respect for writing as a craft, why on Earth should any readers be expected to have any respect whatsoever for the shit they've churned out through the bots?

There are myriad people out there who would chew off their own arm for an opportunity to have a byline beneath a prestigious masthead — and every one of them who relies entirely on their own writing abilities, rather than outsourcing their creative process to the planet-burning chatbot, deserves those opportunities a million times more than those who clearly have no respect for themselves, their peers, or their readership.

Want to read my thoughts on various video games, visual novels and other popular culture things? Stop by MoeGamer.net, my site for all things fun where I am generally a lot more cheerful. And if you fancy watching some vids on classic games, drop by my YouTube channel.
