There was a good piece by 404 Media on "AI slop" today. Author Jason Koebler describes AI slop as a "brute force attack on the algorithms that control reality", and goes on to explain how those taking advantage of AI are exploiting social media algorithms to such a degree that platforms are now flooded with this garbage, making it hard to find 1) anything made by a real person and 2) anything made by someone you might actually want to connect with.

There is zero value to this stuff, other than self-fulfilling engagement. Presumably the long game is to build up "the numbers" with this shit, then sell the accounts, or make bank off impressions-based ad revenue. And the platform holders don't give a shit; as Koebler points out in his piece, it seems like Mark Zuckerberg actively wants the experience on Facebook to be real humans arguing over AI-generated slop rather than anything real and meaningful.

And I don't understand why we're letting this happen. Not only on social media, but in more "traditional" industries, too. It's happening to a frightening degree in publishing, with myriad "get rich quick" schemes fundamentally being based on churning out multiple AI-generated books every week (or even day) and then profiting off, let's face it, vulnerable people who aren't able to tell the difference between garbage churned out by a robot and something written by an actual human being.

As Koebler puts it, "there is a dual problem with this: it not only floods the Internet with shit, crowding out human-created content that real people spend time making, but the very nature of AI slop means it evolves faster than human-created content can, so any time an algorithm is tweaked, the AI spammers can find the weakness in that algorithm and exploit it."

At the moment, there are a few common responses to generative AI:

- "I love generative AI! The genie is out of the bottle, so if you're resisting it you're a Luddite who isn't embracing the latest technological innovations!"

- "Generative AI is just a tool that people can add to their arsenal, like digital art packages. I can't really tell you how or why that's a good thing, but I heard someone else say it so I'm saying it too."

- "Generative AI might be useful in certain circumstances, but I can't really tell you what they are because no-one really knows or can offer specific, concrete examples that aren't prone to hallucinations to such a degree as to make them worthless."

- "Generative AI sucks balls and I hate it."

I'm somewhere towards the bottom of that list, leaning towards hating it and very much wanting it to go away. At present, I am disinclined to trust the people who claim it will be "revolutionary" for things like medicine, because of the number of times it still fucks simple things up. I am also concerned for the field of programming, because as more and more junior coders show up who are only capable of feeding prompts into an AI, not actually doing (and checking!) the coding themselves, we're going to have a real problem on our hands with software development.

At the same time, I'm sure there are some worthwhile use cases for a means of communicating with a computer using natural language. I mean, hell, look at Star Trek; the assumption there was that you could just say "Computer" like you say "Alexa" today, then rattle off an often fairly abstract task for it to complete, and it would do it. That is, presumably, the goal.

But AI isn't there yet, not by a long shot, which is why a ChatGPT subscription can cost $200 a month while OpenAI still can't really tell you what it's for, let alone how to stop it making stupid mistakes. In the meantime, the companies involved in all this shit are burning through both money and the planet's natural resources in pursuit of something which might, in fact, be impossible. "Agents" are coming, apparently, but all we've seen them do so far is make things that are already pretty straightforward to do on the Web (like grocery shopping) actively more cumbersome. And OpenAI's "deep research" tool is utterly laughable at this point, pulling out citation-free forum posts and SEO-optimised slop ahead of actual, worthwhile information written (and reviewed) by humans.

You, reading this, almost certainly know all this, and perhaps you've even read or shared some articles talking about AI slop and the problems it is causing all over the Internet. But what have you done about it? Because I feel like we should be doing more about it, rather than just pointing and tutting at it, going "whoo, lad, that generative AI sure is a bit shit, isn't it? Someone should do something about it."

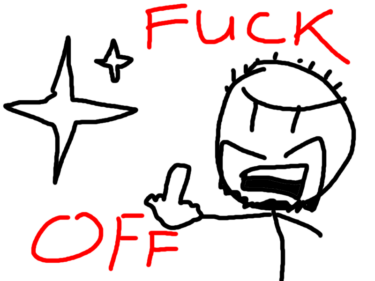

The trouble, of course, is that it's difficult to do anything meaningful about it, particularly when big corporate entities like Microsoft are the ones forcing it onto people through things people use every day like Windows, Office 365, and even the bloody Xbox. I mean, sure, you can find ways to disable it when it does show up, but these workarounds often end up circumvented by the corporations, meaning you need to faff around even more to get rid of the shit. And sure, you can install Linux, but that carries its own burden of needing to know how to do that. Which you and I might be comfortable doing, but what about people who use computers more casually; those who don't know how they work, but just want to be able to get on with simple tasks without intrusive AI features popping up every few seconds?

All we can do, really, is make a specific effort not to use generative AI tools when there are other alternatives available. I will never, ever use generative AI on this site, MoeGamer or my YouTube channel to produce words, scripts, images, thumbnails or videos, however tempting it might be as a "quick fix" to get something done. If that means there are things I either can't do or would have to pay a specialist to be able to do, I will either go without the thing or pay a specialist. Or perhaps even learn how to do the thing myself.

That's a crucial one, I think. Over the years, I've learned how to do a lot of things on computers simply by running into an issue I don't know how to solve, researching it myself and learning how to deal with it. Some of that knowledge I've retained, some of it fell out of my brain the moment I finished using it, but on the whole I've had a net gain in knowledge simply through running into problems and taking the initiative to learn how to fix them myself. I suspect many people who grew up with computers throughout the '80s and '90s are the same.

I'm not going to tell you what to do. But I am going to tell you what I'm doing:

- I will not use ChatGPT to research anything, when perfectly good information is available through well-established, reliable, trustworthy and peer-reviewed sources both online and offline.

- I will not use AI image or video generation for anything, period. If I need an image or video of something, I will either produce it myself, find a usable (and suitably licensed) stock or otherwise publicly available one and provide credit where appropriate, or just go without.

- I will not use AI voice generation to make a "famous" voice say something it never said. Even if it's really funny. I will freely admit to having done this in the past (only among friends), but that was before we really knew or understood the numerous negative impacts that generative AI has on both the environment and culture.

- I will not use AI to create content for the sake of content. I write here because I like writing. I write on MoeGamer because I like writing about games. I make videos because I like making videos. I am not entitled to a "share" of the Internet based on the volume of stuff I churn out, nor am I entitled to be able to make a living from it. I will not pollute the Internet with meaningless slop.

Someday, there may be a valid use case for generative AI. I am open to that. Right now, I do not believe we are there, and I believe the continued proliferation of generative AI online is actively harmful to the Internet specifically, and human culture more broadly.

It needs to stop. But I'm concerned the "genie is out of the bottle" people are right, and that now we've started this process of enshittifying the entire Internet, we can't stop it.

But we can make our own little corners of the Internet a safe haven away from the deluge of sewage. And that's what I'll continue to do.



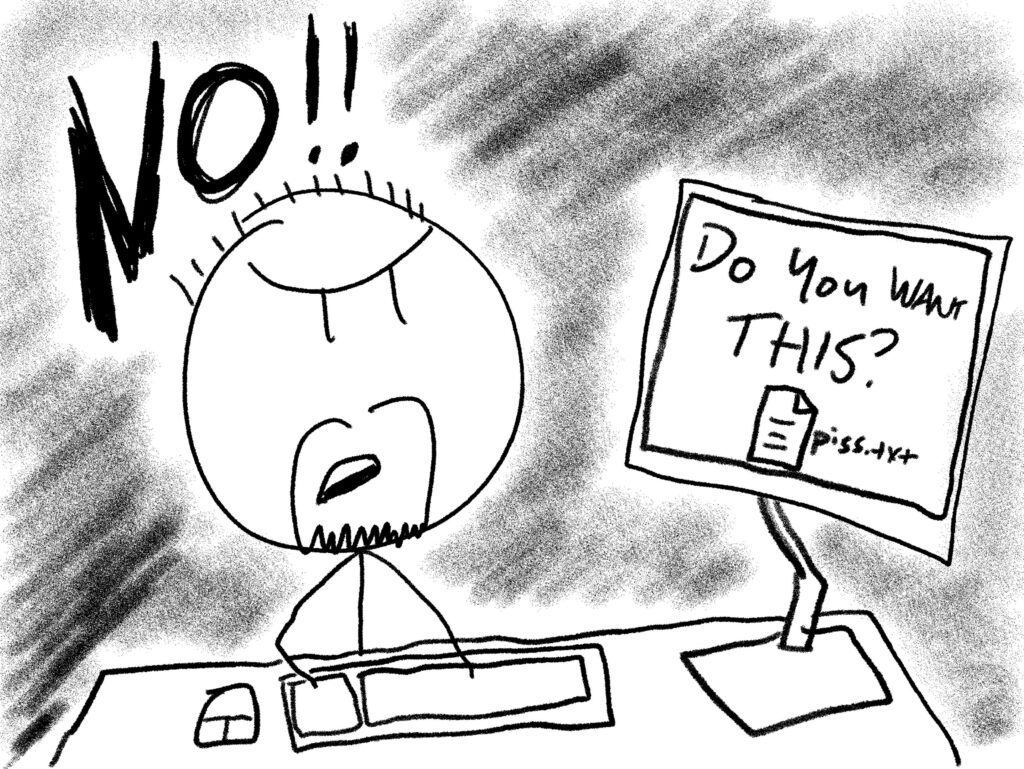

